Computer security may be defined as a system of safeguards designed to protect a computer system and its data from deliberate or accidental damage, or from access by unauthorized persons. That means safeguarding the system against such threats as natural disasters, fire, accident, vandalism, theft or destruction of data, industrial espionage, hackers and various forms of white-collar crime.

Security also refers to the protection of computer-based resources – hardware, software, data, procedures, and people – against alteration, destruction or unauthorized use.

The requirements for security in computer installations can be looked at from a number of aspects, such as physical security, terminal security, data security, programs/software security, procedure security, communication and network security.

Physical security: There is a strong tendency to treat computers as expensive toys to be shown off to important customers and visitors. There is therefore a need to provide physical security in every computer centre.

Unauthorized access: Doors to the computer room should be locked at all times, and only one door should be used for normal access and egress. Access rules and requirements should be changed whenever there is a security violation or an apparent threat from some source, for example, when hard disks have been removed from the computer centre of a bank.

Limited access: Security is also attitudinal, and employees of the computer centre should be encouraged to challenge all strangers within the operating area or computer centre. Furthermore, tours of the computer centre by customers, school groups or senior citizens should NOT be allowed. The fewer the people who enter the area, the lower the risk of a security breach.

Use of back-up files and records: The loss of important records such as accounts receivable can prove to be a real disaster for a company. Personal lockers should be provided for operating personnel within the computer area. Tapes and disk files should not leave the computer room except to go to the library. Passes should be required for any such records that have to leave the building for other locations.

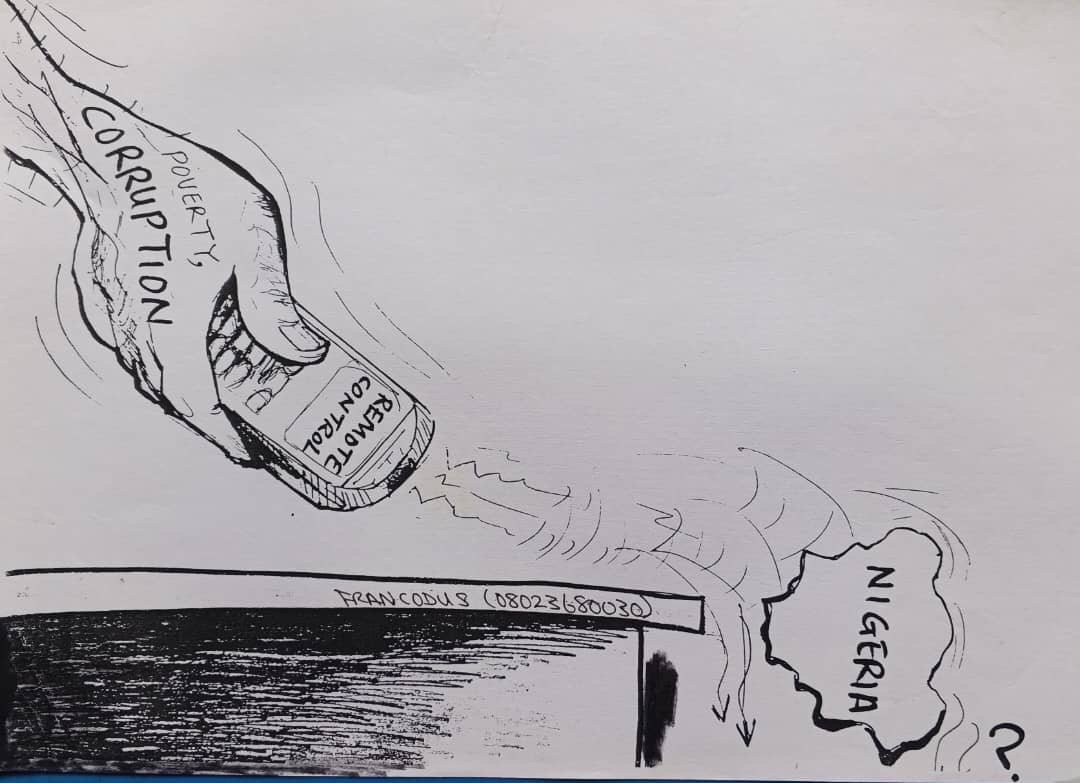

Computers can be reached from remote sites, either in the same building or many miles away. Terminal security for access to the computer can be as important as providing physical security in the form of controlled access to the computer centre.

There are many ways in which data files maintained by the company may be compromised. Some of these ways may prove to be embarrassing to those involved. A company has a general responsibility to keep certain information confidential and may be exposed to legal liability in cases where it is proved negligent. This is also necessary in case there is a fire outbreak at the computer centre.

Security starts with hiring responsible personnel who are capable of doing the work properly and are well trained in what they do. Reference checks should be made for all new hires, and competence should be tested before the individuals are added to the payroll. It is wise to have someone keep an ear to the ground, as adverse information is often available if there is someone to listen.

Planning and scheduling the work of a computer installation is useful in controlling the work that is done, as well as in making allowance for unscheduled work. It will also help to keep track of people who come to the computer centre. It is important to have a regular back-up procedure whereby processed files and programs are copied onto tapes or other disk files and taken to a secure place, such as a bank vault or a fireproof safe in another geographical location.

Just as the transmission of data within the central processing unit is checked through the use of various hardware controls, so it is important to be sure that communication with other internal and external locations is sufficiently secure that the data are transferred accurately. It should also be ensured that, during data communication, only the right parties receive the message that is being sent.

The message should be preceded by a valid header which could contain the length of the message. This can be checked by the receiving computer to be sure that the whole message has been received.
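The header check described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 4-byte header layout and the names `frame` and `unframe` are hypothetical, and a real protocol would normally carry other fields as well.

```python
import struct

# Assumed header layout: one 4-byte unsigned integer, network byte order,
# holding the length of the message body that follows.
HEADER = struct.Struct("!I")

def frame(payload: bytes) -> bytes:
    """Sender side: prefix the payload with a header carrying its length."""
    return HEADER.pack(len(payload)) + payload

def unframe(data: bytes) -> bytes:
    """Receiver side: return the payload, or raise ValueError if the
    received bytes do not match the length declared in the header."""
    if len(data) < HEADER.size:
        raise ValueError("header is incomplete")
    (declared,) = HEADER.unpack_from(data)
    payload = data[HEADER.size:]
    if len(payload) != declared:
        raise ValueError(f"expected {declared} bytes, received {len(payload)}")
    return payload
```

A length check of this kind detects a truncated message, but it does not detect a message whose content was altered without changing its length.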

Networks pose a unique security problem. Many people have access to the system, often from remote locations. To begin with, network operating systems provide basic security features, such as user identification and authentication, typically by password.
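As an illustration of authentication by password, the sketch below stores a salted hash of each password rather than the password itself and compares hashes at log-in. It is not taken from any particular network operating system: the function names and the iteration count are assumptions made for the example.

```python
import hashlib
import hmac
import os

ITERATIONS = 200_000  # assumed work factor, chosen only for this example

def make_record(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest) to store in place of the plain password."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest

def verify(password: str, salt: bytes, digest: bytes) -> bool:
    """Recompute the digest for the offered password and compare it
    with the stored one in constant time."""
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return hmac.compare_digest(candidate, digest)
```

Because only the salt and digest are kept, someone who copies the stored records does not directly obtain the users' passwords.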

Network supervisors must assign varying access rights to individual users. All users, for example, could access word processing software, but only certain users could access payroll files. Some network software can limit how many times users can call up a particular file and generate an audit trail of who looked at which files. This will help to monitor who logs in or accesses any file(s).
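The combination of per-user access rights and an audit trail might look like the following sketch. The rights table, the user names and the function `open_resource` are hypothetical and stand in for whatever the network software actually provides.

```python
from datetime import datetime, timezone

# Hypothetical rights table: resource -> set of users allowed to open it.
ACCESS_RIGHTS = {
    "word_processor": {"ada", "ben", "chi"},
    "payroll_files": {"ada"},
}

# Each entry records (timestamp, user, resource, allowed).
audit_trail = []

def open_resource(user: str, resource: str) -> bool:
    """Decide whether the user may open the resource, and record the
    attempt in the audit trail whether or not it succeeds."""
    allowed = user in ACCESS_RIGHTS.get(resource, set())
    audit_trail.append((datetime.now(timezone.utc), user, resource, allowed))
    return allowed
```

Recording refused attempts as well as successful ones lets the supervisor see who tried to reach files they had no right to open.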

In addition to monitoring access to the network, organizations must be concerned about unauthorized people, possibly thieves, hackers or industrial spies, intercepting data in transit. Data being sent over communication lines may be protected by scrambling the messages, that is, putting them in a code that can be broken only by the person receiving the message. An example is correspondence between two executives or presidents.

This process, called encryption, can be used to protect your systems. Encryption software is available for personal computers. A typical package has security features such as file encryption, keyboard lock and password protection.
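To make the idea of scrambling concrete, the sketch below uses a one-time pad, chosen here only because it fits in a few lines of standard-library Python. It is not the method used by commercial encryption packages, and the function names are hypothetical. It assumes that sender and receiver already share a random key as long as the message and never reuse it.

```python
import secrets

def generate_key(length: int) -> bytes:
    """Create a random key; it must be shared secretly and used only once."""
    return secrets.token_bytes(length)

def scramble(data: bytes, key: bytes) -> bytes:
    """XOR each byte of the data with the matching byte of the key.
    Applying the same key again restores the original data."""
    if len(key) != len(data):
        raise ValueError("key must be exactly as long as the data")
    return bytes(d ^ k for d, k in zip(data, key))
```

The same function serves both parties: the sender calls `scramble(message, key)` and the receiver calls `scramble(ciphertext, key)`. In practice a vetted encryption library should be used instead of a hand-written scheme.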

Sophisticated users of most systems are aware of at least one way to crash the system, denying other users authorized access to stored information. Whatever the level of functionality provided, the usefulness of a set of protection mechanisms depends upon the ability of a system to prevent security violations.

Experience has provided some useful principles that can guide the design and contribute to an implementation without security flaws.

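Here are six examples of design principles that apply particularly to protection mechanisms:

Economy of mechanism: Keep the design as simple and small as possible. Design and implementation errors that result in unwanted access paths are not noticed during normal use, since normal use does not usually include attempts to exercise improper access paths. As a result, techniques such as a line-by-line inspection of software and physical examination of hardware that implements protection mechanisms are necessary. For such techniques to be successful, a small and simple design is essential.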

Fail-safe defaults: Base access decisions on permission rather than exclusion. Under this principle the default situation is lack of access, and the protection scheme identifies the conditions under which access is permitted. A conservative design must be based on arguments why objects should be accessible, rather than why they should not. A design or implementation mistake in a mechanism that gives explicit permission tends to fail by refusing permission, which is a safe situation, since it will be quickly detected.
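A minimal sketch of a fail-safe default is shown below. The grants table and the function `is_permitted` are hypothetical. The point is that anything not explicitly granted, including a user or object the table has never heard of, is refused.

```python
# Hypothetical table of explicit grants: (user, object) -> permitted actions.
GRANTS = {
    ("ada", "payroll_files"): {"read", "write"},
    ("ben", "payroll_files"): {"read"},
}

def is_permitted(user: str, obj: str, action: str) -> bool:
    """Allow the action only if it was explicitly granted.
    A missing entry falls back to an empty set, so the default is refusal."""
    return action in GRANTS.get((user, obj), frozenset())
```

If an administrator mistypes a user name when adding a grant, the effect is that the intended user is refused and complains, which is the quickly detected failure the principle describes.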

Complete mediation: Every access to every object must be checked for authority. This principle forces a system-wide view of access control, which in addition to normal operation includes initialization, recovery, shutdown and maintenance. It implies that a fool-proof method of identifying the source of every request must be devised. It also requires that proposals to gain performance by remembering the result of an authority check be examined sceptically. If a change in authority occurs, such remembered results must be systematically updated.
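The requirement that remembered results be updated when authority changes can be illustrated as follows. The class `Mediator` and its methods are hypothetical. The sketch remembers the outcome of each authority check and discards the remembered outcome as soon as the underlying grant is revoked.

```python
class Mediator:
    """Checks every access and remembers results, but forgets a
    remembered result whenever the underlying authority changes."""

    def __init__(self, grants: dict):
        # grants maps a user name to the set of objects the user may access.
        self._grants = {user: set(objs) for user, objs in grants.items()}
        self._remembered = {}

    def check(self, user: str, obj: str) -> bool:
        key = (user, obj)
        if key not in self._remembered:
            self._remembered[key] = obj in self._grants.get(user, set())
        return self._remembered[key]

    def revoke(self, user: str, obj: str) -> None:
        self._grants.get(user, set()).discard(obj)
        # Drop the remembered result so the next check is made afresh.
        self._remembered.pop((user, obj), None)
```

Without the last line of `revoke`, a user whose right had been withdrawn would continue to be admitted on the strength of the stale remembered result.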

Open design: The design should not be secret. The mechanisms should not depend on the ignorance of potential attackers, but rather on the possession of specific, more easily protected keys or passwords. In addition, any sceptical user may be allowed to convince himself that the system he is about to use is adequate for his purpose. Finally, it is simply not realistic to attempt to maintain secrecy for any system that receives wide distribution.

Separation of privilege: Where feasible, a protection mechanism that requires two keys to unlock it is more robust and flexible than one that allows access to the presenter of only a single key. This principle is often used in bank safe-deposit boxes. It is also at work in the defence system that fires a nuclear weapon only if two different people both give the correct command. In a computer system, separated keys apply to any situation in which two or more conditions must be met before access should be permitted.
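In software, the two-key idea can be sketched as a check that at least two distinct authorized people have approved an action. The function `release` and the names used with it are hypothetical.

```python
def release(approvals: set[str], authorized: set[str], required: int = 2) -> bool:
    """Permit the action only if at least `required` distinct
    authorized people have approved it."""
    return len(approvals & authorized) >= required
```

For example, with `authorized = {"ada", "ben", "chi"}`, the approvals `{"ada", "ben"}` satisfy the check, while `{"ada"}` alone does not, and neither does `{"ada", "mallory"}`, because only one of the approvers is authorized.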

Least privilege: Every user of the system should operate with the least set of privileges necessary to complete the job. Primarily, this principle limits the damage that can result from an accident or error. It also reduces the number of potential interactions among privileged users to the minimum for correct operation, so that unintentional, unwanted, or improper uses of privilege are less likely to occur. Thus, if a question arises related to misuse of a privilege, the number of users that must be audited is minimized. The military security rule of ‘need-to-know’ is an example of this principle.

In conclusion, the computer system provides a powerful tool for the processing and storage of information and, at the same time, a powerful tool for the misuse of the same information. Even the most sophisticated computer systems are vulnerable if proper controls are not put in place to prevent unauthorized access to the system.